Building AI Products Where Getting It Wrong Actually Matters

A framework for shipping AI in high-stakes environments

If you're building AI into a product where accuracy matters — legal, finance, healthcare, anything regulated — you're probably losing sleep over hallucinations.

LLMs are confident. They sound right even when they're wrong. And in some domains, "mostly right" isn't good enough. One hallucinated beneficiary designation, one invented tax rule, one fabricated citation could mean lawsuits, compliance failures, or broken trust that takes years to rebuild.

So how do you ship AI products in high-stakes environments without getting burned?

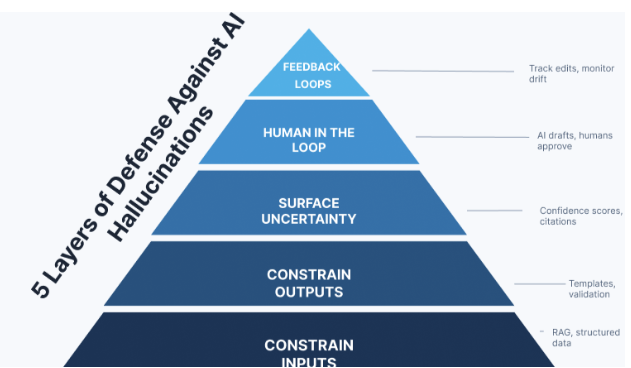

Here's the framework I use:

1. Constrain the Inputs

Don't let the model freelance. Ground it in real data.

Use retrieval-augmented generation (RAG) to ground answers in verified documents rather than relying on training data alone. Pass the model only the context that's relevant to the question, not everything you have. Where possible, use structured data.

The less room for creativity, the less room for invention.
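To make the grounding idea concrete, here's a minimal sketch in Python. It assumes a tiny in-memory corpus and a crude keyword-overlap retriever; a real system would likely use a search index or embeddings. The names `Passage`, `CORPUS`, `retrieve`, and `build_prompt` are hypothetical, as are the policy texts. The point is the shape: only verified, relevant passages reach the prompt, and if nothing relevant is found, no prompt is built at all.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

# Small illustrative stopword list so filler words don't count as matches.
STOPWORDS = {"a", "an", "the", "of", "to", "is", "for", "from", "who", "what"}


@dataclass(frozen=True)
class Passage:
    doc_id: str
    text: str


# Hypothetical verified corpus; in practice these are your vetted documents.
CORPUS = [
    Passage("policy-7", "Beneficiary changes require a signed form from the account holder."),
    Passage("policy-9", "Wire transfers above the daily limit need manager approval."),
]


def tokenize(text: str) -> Set[str]:
    """Lowercase, strip surrounding punctuation, and drop stopwords."""
    words = (w.strip(".,;:!?()\"'").lower() for w in text.split())
    return {w for w in words if w and w not in STOPWORDS}


def retrieve(question: str, corpus: List[Passage], k: int = 2,
             min_overlap: int = 2) -> List[Passage]:
    """Return up to k passages sharing at least min_overlap words with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p.text)), p) for p in corpus]
    relevant = sorted((sp for sp in scored if sp[0] >= min_overlap),
                      key=lambda sp: sp[0], reverse=True)
    return [p for _, p in relevant[:k]]


def build_prompt(question: str, passages: List[Passage]) -> Optional[str]:
    """Build a grounded prompt, or return None when there is nothing to ground on."""
    if not passages:
        return None  # Caller should decline to answer rather than let the model guess.
    sources = "\n".join(f"[{p.doc_id}] {p.text}" for p in passages)
    return (
        "Answer using ONLY the sources below. "
        "If they do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )
```

Calling `retrieve("Who must sign a beneficiary change form?", CORPUS)` keeps only the beneficiary passage, so the wire-transfer policy never reaches the model.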

2. Constrain the Outputs

Generation is risky. Classification is safer.

Use templates with AI filling specific fields rather than writing open-ended prose. Prefer "pick from these options" over "write whatever you think." Validate outputs against known schemas, rules, or formats.

Think of it as guardrails, not limitations.
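Here's one way the "pick from these options" plus validation idea might look, as a rough sketch. It assumes the model has been asked to reply with a small JSON object; the field names (`clause_id`, `category`) and the allowed categories are made up for illustration. Anything that isn't valid JSON, has unexpected fields, or picks a label outside the list gets rejected before it reaches a user.

```python
import json

# Hypothetical label set: the model classifies instead of writing free text.
ALLOWED_CATEGORIES = {"low_risk", "needs_review", "reject"}

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"clause_id": str, "category": str}


def parse_model_output(raw: str) -> dict:
    """Validate raw model output against the schema; raise ValueError if it fails."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    extra = set(data) - set(REQUIRED_FIELDS)
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")

    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"missing or mistyped field: {name}")

    if data["category"] not in ALLOWED_CATEGORIES:
        raise ValueError(f"category not in allowed set: {data['category']!r}")

    return data
```

A failed validation is a signal to retry, fall back to a default, or hand off to a person; what it should never do is pass through silently.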

3. Surface Uncertainty

The worst hallucinations are the confident ones. Build systems that admit doubt.

Implement confidence scoring — even imperfect signals help. Require citations so users can verify claims. Design explicit "I don't know" pathways instead of letting the model guess.

An AI that says "I'm not sure" is more trustworthy than one that's always certain.
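A simple version of an explicit "I don't know" pathway could look something like this. It assumes each draft answer arrives with some confidence signal between 0 and 1 (however you choose to compute it) and a list of cited document IDs. `DraftAnswer`, `present`, and the 0.7 threshold are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass, field
from typing import List, Set

ABSTAIN = "I don't know. I couldn't find a verified source for this."


@dataclass
class DraftAnswer:
    text: str
    confidence: float  # Assumed signal in [0, 1]; how it is computed is up to you.
    citations: List[str] = field(default_factory=list)


def present(draft: DraftAnswer, known_sources: Set[str],
            threshold: float = 0.7) -> str:
    """Show the answer only if it is confident AND every citation is verifiable."""
    verified = [c for c in draft.citations if c in known_sources]
    has_unverifiable_citation = len(verified) != len(draft.citations)

    if draft.confidence < threshold:
        return ABSTAIN  # Low confidence: admit doubt instead of guessing.
    if not draft.citations or has_unverifiable_citation:
        return ABSTAIN  # No sources, or a source we cannot find: treat as invented.

    return f"{draft.text} (sources: {', '.join(verified)})"
```

The design choice worth noting is that a citation pointing at a document you don't have counts against the answer, because a fabricated citation is exactly the failure you're trying to catch.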

4. Keep Humans in the Loop

AI drafts. Humans decide.

This matters most for anything legally binding or high-consequence. Tier your review process: auto-approve low-risk outputs and flag high-risk ones for expert review. Make the UX clear: "this is a suggestion" not "this is the answer."

The goal isn't to slow things down — it's to put human judgment where it matters most.
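Tiered review can start as a plain routing function. The sketch below assumes two signals per draft, whether it's legally binding and a confidence score, plus a made-up 0.9 cutoff; your own risk rules will differ. `Draft`, `Route`, `route`, and `label` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    EXPERT_REVIEW = "expert_review"


@dataclass
class Draft:
    text: str
    legally_binding: bool
    confidence: float  # Assumed signal in [0, 1].


def route(draft: Draft, min_confidence: float = 0.9) -> Route:
    """Send anything legally binding or uncertain to an expert; auto-approve the rest."""
    if draft.legally_binding or draft.confidence < min_confidence:
        return Route.EXPERT_REVIEW
    return Route.AUTO_APPROVE


def label(draft: Draft) -> str:
    """Frame every output as a suggestion, and say so when a human still has to decide."""
    if route(draft) is Route.EXPERT_REVIEW:
        return f"Suggestion (awaiting expert review): {draft.text}"
    return f"Suggestion: {draft.text}"
```

Notice that a legally binding draft goes to an expert even at very high confidence. Confidence tells you how sure the model is; it says nothing about how costly a mistake would be.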

5. Build Feedback Loops

Your users will catch what your evals miss.

Track where users edit or override AI outputs — those corrections are gold. Build evaluation sets and test for regressions. Monitor continuously; models and usage patterns drift over time.

Ship, learn, improve. Repeat.
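As a starting point, the two measurements above can be sketched in a few lines. This assumes you log each AI output next to what the user finally kept, and that you hold an evaluation set of (input, expected output) pairs, which user corrections can feed. The function names and the exact-match comparison are simplifying assumptions; real outputs often need fuzzier scoring.

```python
from typing import Callable, List, Tuple


def override_rate(log: List[Tuple[str, str]]) -> float:
    """Fraction of logged (ai_output, final_output) pairs where the user changed the output."""
    if not log:
        return 0.0
    overrides = sum(1 for ai_output, final_output in log if ai_output != final_output)
    return overrides / len(log)


def accuracy(predict: Callable[[str], str],
             eval_set: List[Tuple[str, str]]) -> float:
    """Exact-match accuracy of predict over (input, expected) pairs."""
    if not eval_set:
        raise ValueError("evaluation set is empty")
    correct = sum(1 for text, expected in eval_set if predict(text) == expected)
    return correct / len(eval_set)


def has_regressed(new_accuracy: float, baseline_accuracy: float,
                  tolerance: float = 0.0) -> bool:
    """True when the new score falls below the baseline by more than the tolerance."""
    return new_accuracy < baseline_accuracy - tolerance
```

Run `accuracy` on every model or prompt change and block the release when `has_regressed` returns true. A rising `override_rate` in production is the cue to add new cases to the evaluation set.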

The Bottom Line

The companies that win in AI won't be the ones who move fastest. They'll be the ones who figure out how to move fast and earn trust.

In high-stakes domains, that means treating hallucination as a design problem — not just a model problem.

What's your approach to building trust in AI products? I'd love to hear how others are thinking about this.